In [1]:


import tensorflow as tf

print("TensorFlow version:", tf.__version__)


TensorFlow version: 2.3.0

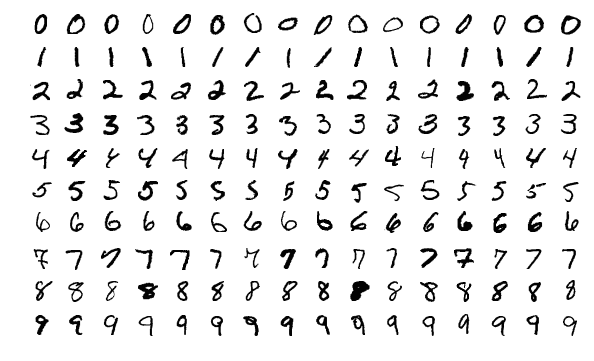

Loading the MNIST dataset

In [2]:


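mnist = tf.keras.datasets.mnist

# Load the 60,000 training and 10,000 test images (28x28, uint8) with their labels
(x_train, y_train), (x_test, y_test) = mnist.load_data()

# Scale pixel values from [0, 255] to [0.0, 1.0]
x_train, x_test = x_train / 255.0, x_test / 255.0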

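MNIST images are 28x28 grayscale arrays of 8-bit intensities (0 to 255). Dividing by 255.0 maps every pixel onto the range [0, 1]. The sketch below applies the same element-wise scaling to a tiny hypothetical 2x3 patch. It uses plain Python lists so it runs without TensorFlow. The `scale_pixels` helper is illustrative and is not part of Keras.

```python
def scale_pixels(image, max_value=255.0):
    """Scale integer pixel intensities in [0, max_value] to floats in [0, 1]."""
    return [[pixel / max_value for pixel in row] for row in image]


# Hypothetical 2x3 grayscale patch (real MNIST images are 28x28, values 0..255)
patch = [[0, 51, 255],
         [102, 204, 0]]

scaled = scale_pixels(patch)
print(scaled)
```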

Building a simple model

In [3]:


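model = tf.keras.models.Sequential([
    tf.keras.layers.Flatten(input_shape=(28, 28)),  # 28x28 image -> 784-element vector
    tf.keras.layers.Dense(128, activation='relu'),  # fully connected hidden layer
    tf.keras.layers.Dropout(0.2),                   # randomly drop inputs (rate 0.2) while training
    tf.keras.layers.Dense(10)                       # one raw score (logit) per digit class
])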

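`Dropout(0.2)` sets each of its inputs to zero with probability 0.2 during training and passes values through unchanged at inference. Keras uses "inverted" dropout: the surviving values are scaled by 1 / (1 - rate), so the expected activation stays the same. The plain-Python sketch below illustrates that rule. The `dropout` function, the seed, and the input vector are hypothetical. They mirror the behaviour, not Keras's actual implementation.

```python
import random


def dropout(values, rate, training, rng):
    """Inverted dropout: while training, zero each value with probability `rate`
    and scale survivors by 1 / (1 - rate); otherwise return the inputs unchanged."""
    if not training or rate == 0.0:
        return list(values)
    keep_scale = 1.0 / (1.0 - rate)
    return [0.0 if rng.random() < rate else v * keep_scale for v in values]


rng = random.Random(0)          # fixed seed so the run is reproducible
activations = [1.0] * 10_000    # hypothetical hidden-layer outputs

train_out = dropout(activations, rate=0.2, training=True, rng=rng)
infer_out = dropout(activations, rate=0.2, training=False, rng=rng)

zero_fraction = train_out.count(0.0) / len(train_out)
mean_train = sum(train_out) / len(train_out)
print(f"zeroed: {zero_fraction:.3f}, mean while training: {mean_train:.3f}")
print("inference unchanged:", infer_out == activations)
```

Because survivors are scaled up during training, no rescaling is needed at inference time.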

In [4]:


model.summary()


Model: "sequential"
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
flatten (Flatten)            (None, 784)               0
_________________________________________________________________
dense (Dense)                (None, 128)               100480
_________________________________________________________________
dropout (Dropout)            (None, 128)               0
_________________________________________________________________
dense_1 (Dense)              (None, 10)                1290
=================================================================
Total params: 101,770
Trainable params: 101,770
Non-trainable params: 0
_________________________________________________________________
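The `Param #` column follows directly from the layer shapes. A `Dense` layer with n inputs and m units stores an n x m weight matrix plus m biases, while `Flatten` and `Dropout` have no weights. A quick arithmetic check (the `dense_params` helper is only for illustration):

```python
def dense_params(n_inputs, n_units):
    """Weights (n_inputs * n_units) plus one bias per unit."""
    return n_inputs * n_units + n_units


flatten_out = 28 * 28                    # Flatten: 28x28 image -> 784 features
hidden = dense_params(flatten_out, 128)  # dense:   784 -> 128
output = dense_params(128, 10)           # dense_1: 128 -> 10

print(flatten_out, hidden, output, hidden + output)  # 784 100480 1290 101770
```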

In [5]:


# Raw scores (logits) from the still-untrained model for the first training image
predictions = model(x_train[:1]).numpy()
predictions


Out[5]:

array([[-1.8647462e-01,  5.3670764e-01, -5.1415348e-01, -1.3579503e-01,
        -6.0872316e-01, -5.1377362e-01, -3.4768134e-05,  1.7156357e-01,
        -3.9986107e-01,  5.4845825e-02]], dtype=float32)

In [6]:


tf.nn.softmax(predictions).numpy()


Out[6]:

array([[0.09152274, 0.18862666, 0.06595077, 0.09628063, 0.05999966,
        0.06597582, 0.11028053, 0.13092513, 0.07393608, 0.11650193]],
      dtype=float32)
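The model's last layer outputs raw, unnormalized scores (logits). `tf.nn.softmax` turns them into probabilities with softmax(z)_i = exp(z_i) / sum_j exp(z_j). Subtracting the largest logit first is a standard trick that avoids overflow without changing the result. The pure-Python sketch below does not use TensorFlow and should reproduce Out[6] from the logits in Out[5], up to float rounding.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a flat list of logits."""
    shift = max(logits)  # subtracting the max leaves the result unchanged
    exps = [math.exp(z - shift) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Logits for the first training image, copied from Out[5]
logits = [-1.8647462e-01, 5.3670764e-01, -5.1415348e-01, -1.3579503e-01,
          -6.0872316e-01, -5.1377362e-01, -3.4768134e-05, 1.7156357e-01,
          -3.9986107e-01, 5.4845825e-02]

probs = softmax(logits)
print([round(p, 4) for p in probs])
print("sum:", sum(probs))
```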

In [7]:


loss_fn = tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True)

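`SparseCategoricalCrossentropy` takes integer class labels (here 0 to 9) rather than one-hot vectors. `from_logits=True` tells it that the model outputs logits, so the softmax is applied inside the loss. For a single example the loss is the negative log-probability of the true class, -log softmax(z)[y]. This is mathematically equal to logsumexp(z) - z_y, a numerically stable form.

The plain-Python sketch below is not TensorFlow's implementation. The label 5 is an arbitrary choice for illustration. For comparison, a model that gives every class probability 0.1 would score -log(1/10), about 2.303.

```python
import math


def sparse_ce_from_logits(logits, label):
    """-log(softmax(logits)[label]), computed as logsumexp(logits) - logits[label]."""
    shift = max(logits)
    log_sum_exp = shift + math.log(sum(math.exp(z - shift) for z in logits))
    return log_sum_exp - logits[label]


# Untrained-model logits from Out[5]; label 5 is an illustrative choice
logits = [-1.8647462e-01, 5.3670764e-01, -5.1415348e-01, -1.3579503e-01,
          -6.0872316e-01, -5.1377362e-01, -3.4768134e-05, 1.7156357e-01,
          -3.9986107e-01, 5.4845825e-02]

loss = sparse_ce_from_logits(logits, label=5)
uniform_loss = -math.log(1 / 10)  # loss if every class got probability 0.1
print(f"loss: {loss:.4f}, uniform baseline: {uniform_loss:.4f}")
```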

In [8]:


model.compile(optimizer='adam',
              loss=loss_fn,
              metrics=['accuracy'])

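`optimizer='adam'` selects Keras's Adam optimizer with its default settings: learning rate 0.001, beta_1 = 0.9, beta_2 = 0.999, epsilon = 1e-7. To illustrate roughly what one update does, here is the textbook Adam rule for a single scalar parameter, minimizing the toy function f(x) = x^2. This is a hypothetical sketch, not Keras's code. Keras applies the same idea to every weight tensor, and its exact placement of epsilon may differ slightly.

```python
import math


def adam_minimize(grad_fn, x, steps, lr=0.001, beta_1=0.9, beta_2=0.999, eps=1e-7):
    """Run `steps` textbook Adam updates on a scalar parameter x."""
    m, v = 0.0, 0.0  # running first- and second-moment estimates of the gradient
    for t in range(1, steps + 1):
        g = grad_fn(x)
        m = beta_1 * m + (1 - beta_1) * g
        v = beta_2 * v + (1 - beta_2) * g * g
        m_hat = m / (1 - beta_1 ** t)  # bias correction for the zero initialization
        v_hat = v / (1 - beta_2 ** t)
        x -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return x


def grad(x):
    return 2 * x  # derivative of the toy loss f(x) = x**2


x_one = adam_minimize(grad, x=1.0, steps=1)
x_many = adam_minimize(grad, x=1.0, steps=2000)
print(x_one, x_many)
```

Because the update divides by the root of the squared-gradient estimate, the first step moves x by roughly the learning rate (from 1.0 to about 0.999), whatever the gradient's scale.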

In [9]:


model.fit(x_train, y_train, epochs=5)


Epoch 1/5
1875/1875 [==============================] - 2s 879us/step - loss: 0.2947 - accuracy: 0.9137
Epoch 2/5
1875/1875 [==============================] - 2s 925us/step - loss: 0.1437 - accuracy: 0.9580
Epoch 3/5
1875/1875 [==============================] - 2s 954us/step - loss: 0.1102 - accuracy: 0.9661
Epoch 4/5
1875/1875 [==============================] - 2s 900us/step - loss: 0.0900 - accuracy: 0.9730
Epoch 5/5
1875/1875 [==============================] - 2s 860us/step - loss: 0.0766 - accuracy: 0.9758

Out[9]:

<tensorflow.python.keras.callbacks.History at 0x7f1099f839d0>
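The `1875/1875` counter in the training log counts batches, not images. When no `batch_size` is passed, `model.fit` uses batches of 32, so one pass over the 60,000 training images takes ceil(60000 / 32) = 1875 steps. The `313/313` reported by `model.evaluate` comes from the same default applied to the 10,000 test images. A quick check:

```python
import math

BATCH_SIZE = 32                   # Keras default when fit()/evaluate() get no batch_size
N_TRAIN, N_TEST = 60_000, 10_000  # MNIST split sizes

train_steps = math.ceil(N_TRAIN / BATCH_SIZE)
test_steps = math.ceil(N_TEST / BATCH_SIZE)   # the last test batch holds only 16 images
print(train_steps, test_steps)  # 1875 313
```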

In [10]:


model.evaluate(x_test, y_test, verbose=2)


313/313 - 0s - loss: 0.0796 - accuracy: 0.9754

Out[10]:

[0.07960231602191925, 0.9753999710083008]
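`model.evaluate` returns `[loss, accuracy]`, matching the `loss` and `metrics` passed to `compile`. Accuracy is the fraction of test images whose highest-scoring class equals the label. The argmax is the same whether it is taken over logits or over softmax probabilities. The reported 0.9754 on 10,000 test images therefore corresponds to 9,754 correct predictions.

The plain-Python sketch below spells out that metric on a hypothetical batch of three logit rows. `accuracy_from_logits` is an illustrative helper, not a Keras function.

```python
def accuracy_from_logits(logit_rows, labels):
    """Fraction of rows whose argmax equals the integer label."""
    correct = sum(
        max(range(len(row)), key=row.__getitem__) == label
        for row, label in zip(logit_rows, labels)
    )
    return correct / len(labels)


# Hypothetical logits for three images over 4 classes, with their true labels
rows = [[2.0, -1.0, 0.5, 0.1],   # predicts class 0
        [0.2, 0.3, 3.1, -0.4],   # predicts class 2
        [1.5, 0.9, -2.0, 0.0]]   # predicts class 0
labels = [0, 2, 1]

print(accuracy_from_logits(rows, labels))  # 2 of 3 correct

# The reported test accuracy, converted back to a count of correct images
print(round(0.9753999710083008 * 10_000))  # 9754
```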

In [11]:


# Wrap the trained model so it outputs probabilities instead of logits
probability_model = tf.keras.Sequential([
    model,
    tf.keras.layers.Softmax()
])

probability_model(x_test[:5])


Out[11]:

<tf.Tensor: shape=(5, 10), dtype=float32, numpy=
array([[2.16737632e-07, 1.00639301e-08, 2.08058896e-06, 3.69298097e-04,
        8.35847364e-12, 1.86631244e-08, 9.44293539e-14, 9.99598086e-01,
        1.12612099e-07, 3.02279786e-05],
       [3.15482773e-09, 3.69438885e-06, 9.99993563e-01, 1.00565217e-06,
        3.63748135e-15, 1.18580135e-06, 6.52262599e-07, 3.42703665e-12,
        2.77176042e-08, 4.78829631e-15],
       [7.48431859e-08, 9.98841465e-01, 3.04258778e-04, 2.81406392e-05,
        5.11805338e-05, 7.44846739e-06, 2.18839123e-05, 6.27289061e-04,
        1.17799733e-04, 3.43982606e-07],
       [9.99946833e-01, 1.73406516e-08, 3.81245036e-06, 3.70854252e-07,
        2.12491678e-08, 2.05819515e-06, 2.97118731e-06, 1.24853177e-05,
        6.72489975e-08, 3.14617173e-05],
       [2.61222317e-06, 1.26015087e-09, 1.62396134e-06, 1.11417165e-07,
        9.99176919e-01, 1.55488397e-06, 1.43458340e-06, 1.57523991e-05,
        2.67988139e-06, 7.97215558e-04]], dtype=float32)>
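Each row of `probability_model`'s output is a probability distribution over the ten digits. The predicted digit is the index of the largest entry, and that entry is the model's confidence. The plain-Python sketch below applies this to the five rows of Out[11], copied verbatim. With TensorFlow, `tf.argmax(..., axis=1)` on the output tensor gives the same indices.

```python
# Probabilities for the first five test images, copied from Out[11]
probs = [
    [2.16737632e-07, 1.00639301e-08, 2.08058896e-06, 3.69298097e-04, 8.35847364e-12,
     1.86631244e-08, 9.44293539e-14, 9.99598086e-01, 1.12612099e-07, 3.02279786e-05],
    [3.15482773e-09, 3.69438885e-06, 9.99993563e-01, 1.00565217e-06, 3.63748135e-15,
     1.18580135e-06, 6.52262599e-07, 3.42703665e-12, 2.77176042e-08, 4.78829631e-15],
    [7.48431859e-08, 9.98841465e-01, 3.04258778e-04, 2.81406392e-05, 5.11805338e-05,
     7.44846739e-06, 2.18839123e-05, 6.27289061e-04, 1.17799733e-04, 3.43982606e-07],
    [9.99946833e-01, 1.73406516e-08, 3.81245036e-06, 3.70854252e-07, 2.12491678e-08,
     2.05819515e-06, 2.97118731e-06, 1.24853177e-05, 6.72489975e-08, 3.14617173e-05],
    [2.61222317e-06, 1.26015087e-09, 1.62396134e-06, 1.11417165e-07, 9.99176919e-01,
     1.55488397e-06, 1.43458340e-06, 1.57523991e-05, 2.67988139e-06, 7.97215558e-04],
]

for i, row in enumerate(probs):
    digit = max(range(len(row)), key=row.__getitem__)  # index of the largest probability
    print(f"image {i}: predicted digit {digit} (p = {row[digit]:.4f})")
```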
